Key takeaways

- Learning tool success depends more on implementation than on the technology itself.

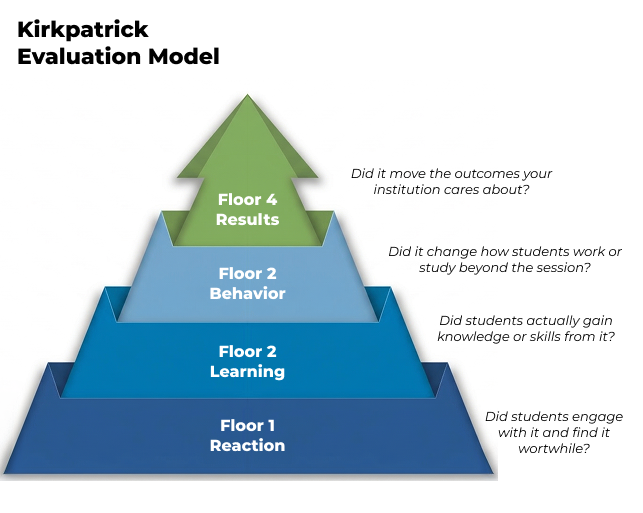

- The Kirkpatrick model organises evaluation into four levels: reaction, learning, behaviour, and results (outcomes). Each is harder to set up and measure than the last.

- An academic study applied all four levels to Kahoot! sessions in medical education and found positive results at each one, including a statistically significant improvement in exam scores.

- The framework is practical. Any program lead can use it to define success before a tool goes live and make sure tools deliver on the goals they were chosen for.

You chose a digital tool for your program for a reason – perhaps more student engagement, collaboration, or better knowledge retention. The question is, how can you make sure that hope translates into practice?

Most tools don’t fail because the technology is wrong. They fail because the implementation doesn’t create the conditions for them to work, and because nobody defined in advance what “working” would actually look like. Without that, there’s no way to know whether you’re getting what you paid for, or just getting students to show up and feel good about it. Showing up does matter at a time when many organizations are struggling with student attendance and participation, but educational technology can support learning in many other ways too.

A research team at the National University of Sciences and Technology (NUST) in Pakistan recently published a study that points toward a better approach. They introduced collaborative Kahoot! sessions into the cardiovascular module of a first-year medical program: 101 students, 30 teams, questions calibrated from basic recall through to application. Then they measured whether it worked at four distinct levels, using a framework called the Kirkpatrick model. They found positive results at every level.

What’s worth borrowing isn’t the study design. It’s the habit of thinking in four levels when checking whether the tool is working.

Four levels, each one harder than the last and each one worth setting up deliberately.

Think of these as floors in a building. Most implementations never get past the ground floor, not because the upper floors are unreachable, but because nobody planned for them from the start.

The Kirkpatrick Evaluation model was developed by educator Donald Kirkpatrick in the 1950s, originally for corporate training. The digital learning era came later, but the four questions it asks remain just as relevant for anyone responsible for how people learn.

Floor 1: Will students actually engage with it?

Before you assess:

Design the experience to be genuinely engaging. In the study, questions were calibrated across cognitive levels (both recall and application) and sessions were structured around teams. That intentionality showed up in the results: the session drew 100% attendance, the only one that day to do so, and over 78% of students reported increased motivation.

What to watch for: Signs such as voluntary participation and student feedback, including whether students talk about the session outside it or ask to use the tool again.

What to watch out for: Don’t mistake high satisfaction for high learning. A session can be enjoyable without moving the needle on learning outcomes.

Floor 2: Are they actually learning something?

Before you assess:

Build a baseline, such as a short pre-assessment. It doesn’t need to be formal; it just needs to exist so you have something to compare against. Over 82% of students in the NUST study reported increased knowledge, supported by faculty observations of genuine peer discussion and reasoning during sessions.

What to watch for: Evidence that students can do or explain something they couldn’t before. Self-reported gains are a useful signal, but compare against something observable.

What to watch out for: Don’t rely only on self-reported learning. How students feel about their progress needs to sit alongside another type of assessment.
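As a rough illustration of the baseline idea on this floor, here is a minimal Python sketch that compares a short pre-quiz with a post-quiz for the same students. The function name `average_gain` and the scores are hypothetical, invented purely for the example; they are not data from the NUST study.

```python
from statistics import mean


def average_gain(pre_scores, post_scores):
    """Return the mean per-student change between a pre-quiz and a post-quiz.

    Both lists must be in the same student order, one score per student.
    """
    if len(pre_scores) != len(post_scores):
        raise ValueError("each student needs both a pre and a post score")
    gains = [post - pre for pre, post in zip(pre_scores, post_scores)]
    return mean(gains)


# Hypothetical scores out of 10 for five students (illustrative only).
pre = [4, 5, 6, 3, 7]
post = [6, 7, 6, 5, 8]
print(f"Average gain: {average_gain(pre, post):.1f} points")
```

A positive average gain is only a starting signal. It says nothing about why scores moved, which is why it should sit alongside faculty observation and self-reported learning.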

Floor 3: Does it change how the students apply what they’ve learned?

Before you assess:

This is the floor most implementations skip, and the hardest one to set up. Behaviour change means students doing something differently after the session, not just during it. A single gamified session is an event. A recurring format with team structures or peer accountability is an environment. For this floor to be measurable, you need to be watching for it from the start, which means telling faculty what to look for before the intervention begins, not after.

What to watch for: Changes in how students reason through problems or engage with material in other contexts. Do students who worked in peer teaching roles during sessions start asking better questions in tutorials? The NUST study noted exactly this kind of shift, with one faculty member observing that the sessions had reshaped their own understanding of the content.

What to watch out for: Don’t confuse in-session engagement with lasting behavioural change. A student can participate actively for fifty minutes and go back to exactly the same habits the moment they leave.

Floor 4: Does it move outcomes you actually care about?

Before you assess:

Define the outcomes you’re trying to move and identify a comparison point before you start looking at results: a parallel module, a previous cohort, or some other baseline assessment. In the NUST study, exam scores in the gamified module were nearly five percentage points higher than the control, a statistically significant result. This was also meaningful because it sat on top of three floors of supporting evidence.

What to watch for: Measurable differences in the outcomes your program actually cares about, such as grades, retention, or progression.

What to watch out for: Don’t expect clear results from a single pilot, and equally, don’t skip this floor entirely because measurement feels too hard.
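If you want a feel for what comparing against a control involves, the sketch below computes Welch's t statistic for two groups of exam scores using only Python's standard library. This is an assumption made for illustration: the statistical method the NUST team used is not described here, and Welch's t-test is simply one common choice when two groups may differ in size and spread. The function name `welch_t` and the scores are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(group_a, group_b):
    """Welch's t statistic for the difference in means (group_a minus group_b).

    Uses the sample variance of each group and does not assume the two
    groups have equal variance or equal size.
    """
    if len(group_a) < 2 or len(group_b) < 2:
        raise ValueError("each group needs at least two scores")
    standard_error = sqrt(
        variance(group_a) / len(group_a) + variance(group_b) / len(group_b)
    )
    return (mean(group_a) - mean(group_b)) / standard_error


# Hypothetical exam percentages (illustrative only, not study data).
gamified_module = [70, 75, 80]
comparison_module = [65, 70, 75]
print(f"Welch t statistic: {welch_t(gamified_module, comparison_module):.2f}")
```

The t statistic on its own does not tell you whether a difference is statistically significant. For that you need a p-value, which a statistics package can provide (for example, SciPy's `ttest_ind` with `equal_var=False`), and with groups as small as the ones above almost no difference would qualify.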

You don’t need a research team. You need a plan.

The NUST study worked because the team thought through all four levels before the sessions began, not to produce a research paper, but to run a good intervention.

Write down what success looks like at each floor before you start. Floor 1 data is usually already there: attendance records, session feedback forms, whether students showed up voluntarily. Floor 2 takes a baseline: a short quiz before the tool is introduced, so you have something real to compare against later. Floor 3 takes intention: asking faculty to note whether students are approaching problems differently in other sessions, or whether study group behaviour has shifted. Floor 4 takes a comparison point defined in advance: a parallel module, last year’s cohort, or a pre-intervention assessment score.

Your students are already telling you something, in attendance patterns, in how they ask questions, in whether anything changes after the session ends. The four floors are just a way of listening more deliberately.